
Frank Kopers, Editor-in-Chief of Marketupdate, explains the concept of high frequency trading in this article and discusses its implications for the market and for private and institutional investors.

Probably all investors today are aware of high frequency trading: computerized trading using fast computers that are programmed to buy and sell securities in milliseconds. These computers run very advanced algorithms that take advantage of small inefficiencies in the financial markets. Computers can process new data far faster than human investors, giving them a substantial and profitable speed advantage.

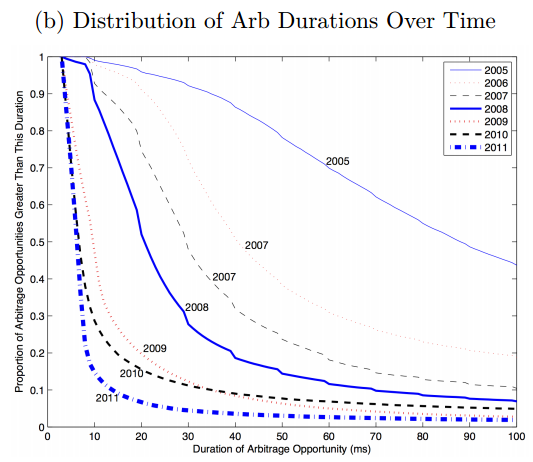

As a result, arbitrage has become faster and more efficient year after year. The bid-ask spread, for example (the difference between the prices at which investors are willing to buy and sell), decreased substantially thanks to high frequency trading. In just twenty years the bid-ask spread narrowed from about 90 to just 3 basis points, which means there is much less overhead now than there was back then. Computerized trading also improved the arbitrage capabilities of the financial markets, according to a paper published in 2013 titled “The High-Frequency Trading Arms Race: Frequent Batch Auctions as a Market Design Response”. In this paper, the researchers looked at the differences between an ETF and a futures contract based on the same S&P 500 index between 2005 and 2011. After analyzing the data, they found that arbitrage took 97 milliseconds in 2005, compared to just 7 milliseconds in 2011.
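To make the basis-point figures concrete, here is a minimal sketch of how a quoted spread is commonly expressed in basis points relative to the midpoint price. The function name and the quotes are hypothetical, chosen only to reproduce the orders of magnitude mentioned above. They are not market data.

```python
def spread_bps(bid: float, ask: float) -> float:
    """Quoted bid-ask spread relative to the midpoint, in basis points."""
    mid = (bid + ask) / 2
    return (ask - bid) / mid * 10_000  # 1 basis point = 0.01%


# Hypothetical quotes around a price of 100 (illustrative assumption)
wide = spread_bps(bid=99.55, ask=100.45)      # roughly 90 basis points
tight = spread_bps(bid=99.985, ask=100.015)   # roughly 3 basis points

print(f"wide spread:  {wide:.1f} bps")
print(f"tight spread: {tight:.1f} bps")
```

On a share priced around 100, the difference between the two cases is a round-trip cost of about 0.90 versus about 0.03.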

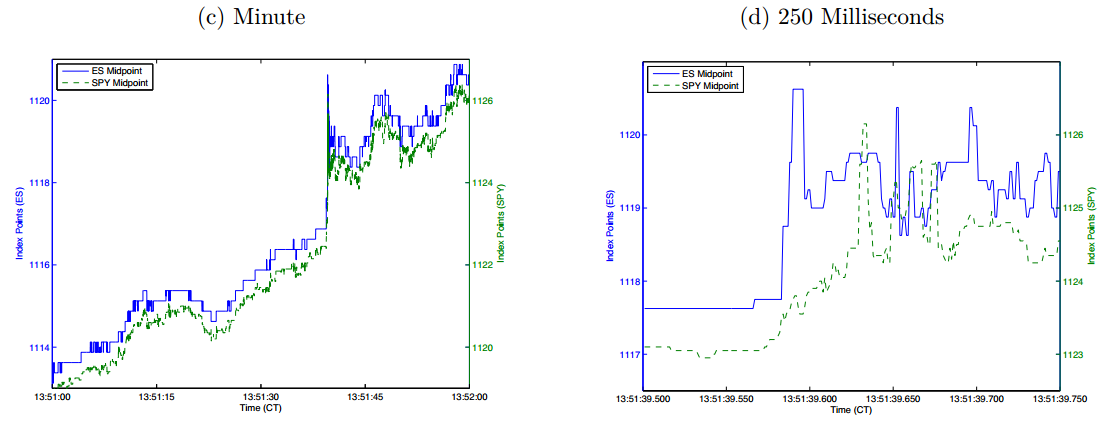

High frequency trading works on a very short time horizon, one that is invisible to the eyes of investors. While human investors can only follow stock market movements from second to second, computerized trading takes place on a completely different playing field within a single second. The following graph shows the difference between what investors see on their computer screens and what high frequency trading computers see. The graph on the left shows the movement of an exchange traded fund and a future based on the S&P 500 index within one minute, while the graph on the right shows the prices of the same securities within just 250 milliseconds. As you can see, both the exchange traded fund and the future track the stock market closely in the long run, but they fluctuate over a time horizon of just milliseconds. Those small fluctuations are where high-frequency trading can arbitrage for a profit.
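The idea of spotting such short-lived price gaps can be sketched in a few lines. This is only an illustration under strong assumptions: the two price series are hypothetical, sampled at the same instants, and already scaled to the same units. The function name and the threshold are made up for the example, and real systems also have to account for fees and execution risk.

```python
def arbitrage_gaps(etf_prices, future_prices, threshold):
    """Return the sample indices where the two prices differ by more than threshold."""
    return [
        i
        for i, (etf, fut) in enumerate(zip(etf_prices, future_prices))
        if abs(etf - fut) > threshold
    ]


# Hypothetical samples: the future moves first, the ETF follows one sample later
etf_prices = [100.00, 100.00, 100.05, 100.05]
future_prices = [100.00, 100.05, 100.05, 100.05]

gaps = arbitrage_gaps(etf_prices, future_prices, threshold=0.02)
print(gaps)  # indices at which the gap is wide enough to trade on
```

The gap exists for a single sample only, which is why the fastest participant is the one who captures it.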

Race to the bottom

High frequency trading is most profitable when your computers can front-run those of your competitors. Computers that process new information faster and move in and out of positions faster make more profit. This is a race to the bottom: shaving off milliseconds with faster hardware, smarter algorithms and better networking technologies. The following graph shows the result of this race to the bottom.

Liquidity evaporates

High frequency trading makes the financial markets more efficient, you might think. But this is not the complete story. Computerized trading can also disrupt the market, for example when algorithms get out of control. Back in May 2010, the Dow Jones index lost more than a thousand points in just a couple of minutes, the biggest drop ever witnessed on the US stock market. After the sharp drop, the same computers started buying again until prices were back at the level they were at before the flash crash. In a study of the cause and effect of this flash crash, the American regulator called it a ‘hot potato volume effect’ caused by high frequency trading. One might wonder if such volatility could take place in a market where computers do not represent more than half of the total trading volume…

The speed at which high frequency trading takes place can cause instability as well. It takes time to bring buyers and sellers together and to guarantee market liquidity, yet everything high frequency trading tries to accomplish is to remove the time factor. Imagine a high frequency trading system trying to sell a large amount of securities without any buyers showing up for a second. Looked at on a millisecond scale, the volume dries up.

Going too fast?

So it looks like high frequency trading can be too fast, reducing liquidity and causing volatility in the stock market. Austin Gerig and Daniel Fricke recently published a working paper titled “Too Fast or Too Slow? Determining the Optimal Speed of Financial Markets”. In this paper, they used historical data on US stocks to determine the optimal trading interval for computerized trading. After analyzing the data, they came up with an efficient trading interval of between 200 and 900 milliseconds. According to their research, trading is most efficient at one to five trades per second, depending on the volatility and the popularity of a specific security. Trading at a higher speed than five times per second appears to be unnecessary at best and harmful at worst.
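The link between the interval and the "one to five trades per second" figure is simple arithmetic, shown here as a small sketch. The function name is hypothetical and only the two interval bounds come from the text.

```python
def trades_per_second(interval_ms: float) -> float:
    """Convert a trading interval in milliseconds to trades per second."""
    return 1000.0 / interval_ms


fastest = trades_per_second(200)  # shortest interval cited in the text
slowest = trades_per_second(900)  # longest interval cited in the text

print(f"{slowest:.2f} to {fastest:.2f} trades per second")
```

A 200 millisecond interval corresponds to five trades per second and a 900 millisecond interval to slightly more than one.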

High frequency trading might be a profitable business for the companies running these systems, but for society at large it doesn’t provide any real productivity gains. All those smart people building high frequency trading computers do not improve the standard of living of society as a whole. Instead of using their knowledge to develop models that can be applied in the real economy, they build models to shave a few pennies off the profit of other investors.

It might be a good idea to introduce a trading frequency limit, where transactions are executed in fixed batches every 0.1 second. Such a speed limit on trading would support market liquidity and stability, without the harmful effects of trading on the scale of milliseconds and nanoseconds.
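How such batching could work is sketched below. This is a simplified illustration, not the mechanism specified in the papers cited in this article: orders are grouped into 100 millisecond windows, and each window is cleared at a single price that maximises matched volume (ties go to the lowest such price, an arbitrary choice). The `Order` class, the function names and all order data are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

BATCH_MS = 100  # the 0.1 second interval suggested in the text


@dataclass
class Order:
    side: str     # "buy" or "sell"
    price: float  # limit price
    qty: int      # number of units
    t_ms: int     # arrival time in milliseconds


def clear_batch(orders):
    """Return (price, volume) maximising matched volume, or None if nothing crosses."""
    best = None
    for p in sorted({o.price for o in orders}):
        demand = sum(o.qty for o in orders if o.side == "buy" and o.price >= p)
        supply = sum(o.qty for o in orders if o.side == "sell" and o.price <= p)
        volume = min(demand, supply)
        if volume > 0 and (best is None or volume > best[1]):
            best = (p, volume)
    return best


def run_batches(orders):
    """Group orders by arrival window and clear each window independently."""
    batches = defaultdict(list)
    for o in orders:
        batches[o.t_ms // BATCH_MS].append(o)
    return {b: clear_batch(batch) for b, batch in sorted(batches.items())}


# Hypothetical orders; the last one arrives in the second window
orders = [
    Order("buy", 100.02, 50, 12),
    Order("buy", 100.00, 30, 95),
    Order("sell", 99.99, 40, 3),
    Order("sell", 100.01, 60, 60),
    Order("buy", 100.05, 10, 130),
]
results = run_batches(orders)
print(results)
```

Within a window the arrival time plays no role: the order that arrived after 3 milliseconds is treated the same as the one that arrived after 95 milliseconds, so shaving off a few milliseconds no longer gives an advantage.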

Sources:

– Too Fast or Too Slow? Determining the Optimal Speed of Financial Markets (Fricke and Gerig, 2015)

– The High-Frequency Trading Arms Race: Frequent Batch Auctions as a Market Design Response (Budish et al., 2013)

– http://www.bloombergview.com/articles/2015-01-25/high-frequency-traders-need-a-speed-limit

– http://www.businessinsider.com/high-frequency-trading--a-liquidity-hoax-2010-12?IR=T